Numercial Analysis

About Error

-

Definition

$$\begin{align*} \text{When } &\alpha = 1 \text{, it is called linear convergent} \\ &\alpha = 2 \text{, it is called quadratically convergent} \end{align*}$$

$\text{If } \{x_n\}_{n=1}^{\infty} \text{ to } X^*, \text{we say } \{x_n\}_{n=1}^{\infty} \text{ converges to } X^* \text{ of order } \alpha \text{ with asymptotic error } \lambda $

$\text{of } \lim_{n \rightarrow \infty} \frac{x_{n+1} - X^*}{x_n - X^*} = \lambda$. -

absolute error : $|P - P^{*}|$ , if $P$ is an approximate for $P^{*}$.

-

relative error : $\frac{|P - P^{*}|}{|P^{*}|}$, if $|P^{*}| \neq 0$

Ch2 Solution of Equation in one variable

Bisection Method

Necessary Condition: $f$ is a function $\in C(a ,b)$ and $f(a) f(b) < 0$

Algorithms

Given $f(x), f(a), f(b)$ with tolerance $\epsilon_1$, $\epsilon_2$

def bisection(f ,a ,b,tolerance):

if f(a) * f(b) > 0:

return None, None

if a > b:

a ,b = b ,a

mid = (a+b)/2

iteration = 0

error = [(b-a)/2]

while (abs(f(mid)) > tolerance and b - a > tolerance):

mid = (a + b)/2

if f(mid) == 0:

return mid, None

elif f(mid)*f(a) > 0:

a = mid

else:

b = mid

iteration += 1

error.append((b-a)/2)

ratio = error[iteration]/error[iteration-1]

print(f"x_{iteration}: {mid:.6f} ,error: {error[iteration]:.6f} ,e_{iteration}/e_{iteration-1}: {ratio:.6f}")

return (a+b)/2 ,(b-a)/2

def f(x):

return x**3

res, err = bisection(f ,-100.565664, 333.5456,1e-10)

print(f"root find:{res}, with error: {err}")

Theorem

Suppose f $\in C[a,b]$ and $f(a)$, $f(b)$ < 0. The bisection method generates a sequence $\{P_n\}_{n = 1}^{\infty}$ for approxmating a zero $P^{*}$ of $f(P^*) = 0 $ with $|P_n - P^*| \le \frac{b-a}{2^n}$,$\forall n > 1$

- proof

For any $n \ge 1$, $\exists subinternals:\ [a_n, b_n] of [a ,b]$ s.t $b_n - a_n = \frac{1}{2^n} (b-a)$ and $P^* \in (a_n,b_n)$

$$P_n = \frac{1}{2} (a_n + b_n)$$

$$\therefore |P_n - P^*| \le \frac{1}{2} (b_n - a_n) = \frac{1}{2} \cdot \frac{1}{2^{n-1}} (b-a) = \frac{1}{2^n} (b-a)$$ Hence $\{P_n\}$ converges to $P^*$ with rate $O(\frac{1}{2^n})$

- Note

For a function f with accuraccy $\epsilon$ in interval $[a ,b]$, how many steps required? $|P_n - P^*| \le \frac{1}{2^n} (b-a) < \epsilon$, n is the step required

Fix point

Definition

A number $X^*$ is called fixed point of a function $g$ iff $X^* = g(X^*)$

Theorem : Uniqueness and existence of fixed point

- if $g \in C[a,b]$ and $g(x) \in [a ,b]$,then $\exists X^{*} \in [a ,b]$

- if $g'(x)$ exists on $[a ,b]$ and $|g'(x)| \le k < 1$, $\forall x \in (a ,b)$, then $\exists ! X^* \in [a ,b]$

-

Explanation

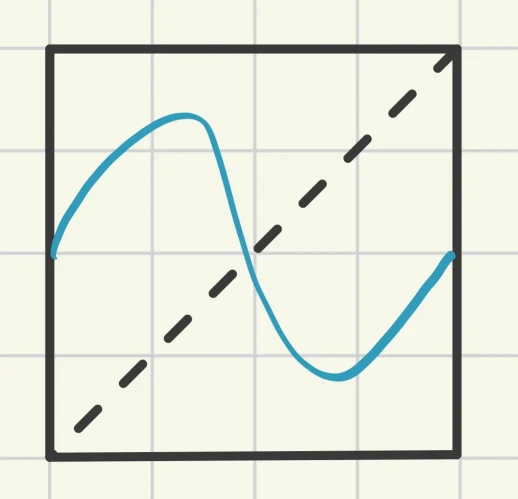

- Imagine there is square with the range $x \in [a ,b]$, $y \in [a, b]$. Then, $g(x)$ is a continuous curve that extending from $x = a$ to $x = b$. Because the value of $g(x)$ is always between $[a,b]$ (bounded by the upper and lower boundaries), this line must cross the diagonal $y = x$ at least once. That intersection point is the fixed point $X^*$.

- $|g′(x)|$ is the slope of the function. The restriction $|g′(x)| \le k < 1$ means that the function’s change is relatively “gradual,” even flatter than the diagonal $y = x$ (with a slope of 1).If you cross the diagonal once and want to cross it again, your slope must become steeper than 1 to “catch up” with the diagonal. Since the slope is restricted to below 1, once the function crosses the diagonal, it can never return.

-

proof

- Existence

- Case 1: $g(a) = a$ or $g(b) = b$

$g(x)$ has fixed point. - Case 2: $g(a) > a$ and $g(b) < b$

$h(x) := g(x) - x$, is continuous on $[a,b]$ and $h(a) > 0$,$h(b) < 0$. By Intermediate Value Theorem, $\exists X^* \in (a ,b)\ s.t.\ h(X^*) = 0$, i.e. $g(X^*) = X^*$ Hence, the fixed point exists.

- Case 1: $g(a) = a$ or $g(b) = b$

- Uniqueness

Suppose $\exists \ y^* ,y^* \neq X^*\ s.t.\ g(y^*) = y^*$, By the Mean Value Theorem,$\exists \ \xi \in R ,\ \xi \in (X^*, y^*) \text{ s.t. } g'(\xi) = \frac{g(X^*) - g(y^*)}{X^* - y^*} $ $$\begin{align*} \therefore |g(X^*) - g(y^*)| &= |g'(\xi)||X^* - y^*| \\ &\Rightarrow |X^* - y^*| = |g'(\xi)||X^* - y^*| \\ &\Rightarrow |g'(\xi)| = 1\quad (\rightarrow \leftarrow) \end{align*} $$ Hence, $X^* = y^*$, which means the fixed point is unique.

- Existence

-

Extend

In the theorem, why $g'(x)$ cannot less than -1? In the graph below we can find that even if g’(x) < -1, the fixed is still unique.

THIS IS FALSE, because it would never satisfy $g(x) \in (a ,b)$.

By the Mean Value Theorem, $\exists \ c \in (a ,b) \ s.t. $

Algorithms: Find fixed point

def fixed_point(f, x_0, tolerance = 1e-10, max_iteration = 100):

x = [x_0]

for i in range(1, max_iteration+1):

x.append(g(x[k-1]))

if abs(x[k] - x[k-1]) < tolerance:

return x[k]

return x[-1]

- Theorem

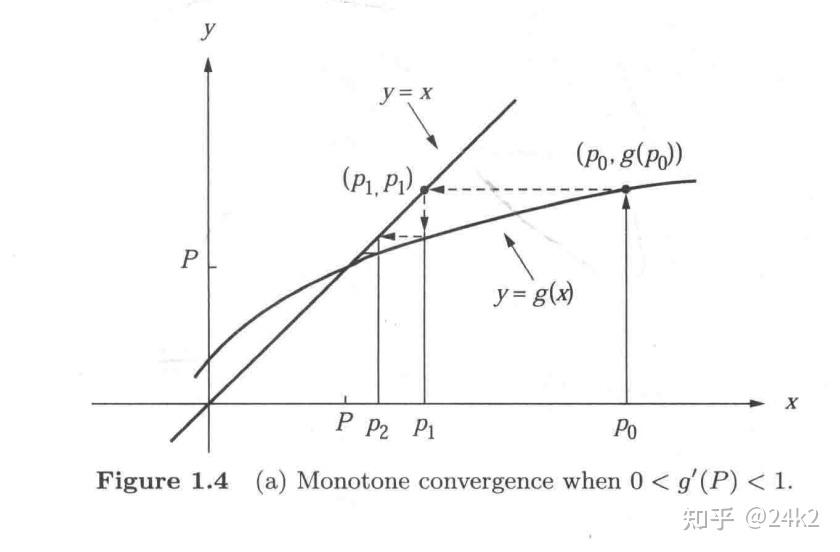

$\text{If }f \in C[a ,b] \ , \ g(x) \in [a ,b]\ , \forall x \in (a ,b)\ , \ |g'(x)| \le k < 1\ ,\text{ for some } k \in \mathbb{R} \text{, then for any } x_0 \in (a ,b) \text{, the sequence }\{x_n\}_{n = 1}^{\infty} \text{ generated by } x_n = g(x_{n-1}) \ , \ n \ge 1 \text{, converges to the unique fixed point in }[a,b].$

-

proof

$$\begin{align*} |x_n - X^*| &= |f(x_{n-1} - f(X^*))| \quad \text{(by MVT)}\\ &= |g'(\xi) (x_{n-1} - X^*)| \quad \xi \in (x_{n-1} ,X^*) \\ &\le k|x_{n-1} - X^*| \le k^2|x_{n-2} = X^*| \le \cdots \le k^n|x_0 - X^*| \\ \end{align*}$$

For $n > 0$ , $X^*$ is a fixed point

$$ \lim_{n \rightarrow \infty} k^n = 0 \Rightarrow \lim_{n \rightarrow \infty} |x_n - X^*|=0 \quad \text{ Hence, } \{x_n\} \text{ converges to } X^* $$ -

Cor: The error bound

$$ |x_n-X^*| \le k^n \text{, max}(x_0-a, b-x_o) \text{ and } |x_n - X^*| \le \frac{k^n}{1-k} |x_1 - x_0|$$

Error Analysis

- Theorem: convergent rate for fixed point iteration

$$\begin{align*} &\text{Let } g \in C[a ,b] \text{, } g(x) \in [a, b] \text{, } \forall \ x \in [a ,b]. \text{ If } |g'(x)| \le k < 1 \text{ and } g'(x) \neq 0. \\ &\text{Then, for any } x_0 \in [a ,b], \text{ the fixed point sequence } \{x_n\}_{n=1}^{\infty} \text{ with }\\ & x_n = g(x_{x_{n-1}}) \text{ will converge linearly to unique } X^* \end{align*}$$

- proof

$$\begin{align*} &\because x_{n+1} - X^* = g(x_n) - g(X^*) = g'(\xi)(x_n - X^*) \quad \text{(MVT)} \\ &\text{Since } g'(x) \text{ is continuous on } (a ,b) \text{, } \lim_{n \rightarrow \infty} g'(\xi) = g'(X^*)\\ &\text{Thus, } \lim_{n \rightarrow \infty} \frac{|x_{n+1} - X^*|}{|x_n - X^*|} = \lim_{n \rightarrow \infty} |g'(\xi)| = |g'(X^*)| \le k \Rightarrow \alpha = 1 \text{, } x = g'(X^*) \\ &\text{Hence, } \{x_n\} \text{ converges linearly to } X^* \end{align*}$$

Newton’s method

If $f \in C^2[a ,b], \ x_0 \in (a,b)$, the solution $X^* \in (a ,b) \text{ and } f(X^*) = 0$. Assume $x_0$ is close to $X^*$ by Tylor’s expansion.

$$ f(X^*) = f(x_0) + f'(x_0)(X^* - x_0) + \frac{f''(\xi)}{2!} (X^* - x_0)^2, \quad \xi \in (x_0,X^*)$$

$ \because |x_0 - X^*| \text{ is small} \quad \therefore |x_0 - x^*| \rightarrow 0$

$ \because f \in C^2[a ,b] \quad \therefore |f''(\xi)| \text{ is bounded.}$

Algorithms

import math

def d(f, x):

h = 1e-6

return (f(x + h) - f(x - h)) / (2 * h)

def f(x):

return math.log(x) - 1

def newton_method(f, a, tolerance):

res = [a]

res.append(res[0] - f(res[0]) / d(f, res[0]))

iteration = 1

while (abs(res[iteration] - res[iteration-1]) >= tolerance or abs(f(res[iteration])) >= tolerance):

current_x = res[iteration]

next_x = current_x - f(current_x) / d(f, current_x)

res.append(next_x)

iteration += 1

print(f"Iteration {iteration}: x = {res[iteration]:.6f}, f(x) = {f(res[iteration]):.6e}")

return res[iteration]

final_root = newton_method(f, 4, 1e-6)

print(f"Found root: {final_root:.6f}")

- Theorem

$\text{If } f \in C^2[a ,b], \ \exists \ X^* \in [a ,b] \text{ s.t. } f(X^*) = 0 \text{ and } f'(X^*) \not= 0 \text{ then } \exists \delta > 0 \text{ s.t. } x_0 \in (X^* - \delta , X^* + \delta) ,\{x_n\}_{n=1}^{\infty} \text{ converges.}$

-

proof

$$g'(x) = 1 - \frac{f'(x)f'(x) - f(x)f''(x)}{f'(x)^2} = \frac{f(x)f''(x)}{f'(x)^2} \text{ and } g'(X^*) = \frac{f(X^*)f''(X^*)}{f'(X^*)^2} = 0$$$$\begin{align*} &\because g'(x) \text{ is continuous around } X^* \text{ and } \exists \ k \in (0, 1) \text{ s.t. } |g'(x)| \le k < 1, \forall x \in [X^* - \delta_1, X^* + \delta_1], \\ &\text{ for some } \delta_1 > 0.\text{ Let } \delta = min(\delta_1, \bar{\delta}),\ |g(x) - g(X^*)| = |g'(\xi)||X^* - x| \text{ (MVT), where $\xi \in (X^*, x)$} \\ &\therefore |g(x) - X^*| = |g(x) - g(X^*)| \le k|x-X^*| < |x-X^*| \end{align*}$$

$\text{Let } g(x) := x - \frac{f(x)}{f'(x)}$

$\because f' \text{ is continuous and } f'(X^*) \not= 0$

$\therefore f'(x) \not= 0, \forall x \in (X^* - \bar{\delta}, X^* + \bar{\delta})$

Hence, $g(x)$ is continuous on ($X^* - \bar{\delta} ,X^* + \bar{\delta}$) andHence, the function $g(x)$ maps $[X^* - \delta_1, X^* + \delta_1]$ onto $[X^* - \delta,X^* + \delta]$. By the fixed point theorem, the sequence $\{x_n\}_{n = 1}^{\infty}$ generated by $x_n = g(x_{n-1}),\ n \ge 1$ will converge to $X^*$ for any initial value $x_0 \in (X^* - \delta, X^* + \delta)$.

Error Analysis

- Theorem: convergent rate of Newton’s method